Introducing CinemaCLIP

A hybrid CLIP model and taxonomy for the visual language of cinema

Existing ML models that bridge natural language and vision perform poorly on tasks involving cinematic understanding. This is especially pronounced when comparing ML analysis of cinematic content with that of human domain experts like cinematographers, editors, and directors. At OZU, we saw this as a missed opportunity, so we set out to build new ML systems that deeply understand the art of cinema and visual storytelling.

To understand this gap, we first built a careful taxonomy of cinematic concepts together with industry specialists. We then evaluated existing models, as well as our own Cinema family of models, against a new set of benchmark datasets.

What we found matched our qualitative assessment: evaluating leading CLIP models against these datasets shows a fundamental gap in their understanding of the nuances of visual grammar. Furthermore, model scale alone does not solve this problem; models up to 28× larger still fail to capture the structure of cinematic visual language. This limits their usefulness in real-world professional video tasks where understanding visual language is critical.

The result of our work is a new model, CinemaCLIP, and a collection of 22 datasets that represent an extensive taxonomy of professional visual language.

CinemaCLIP is a hybrid CLIP model that pairs zero-shot inference with specialised classification heads. It outperforms existing CLIP models as well as state-of-the-art vision-language models (VLMs) in both zero-shot inference and one-shot classification tasks.

The Problem

Cinematographers, photographers, editors and directors all use very specific language to describe imagery on the job. However, their language can be fuzzy: not all cinematographers and photographers agree on where a "close up shot" begins and a "medium shot" ends. Furthermore, industry terms of art often attempt to capture multiple competing visual concepts at once, which leads to poor machine interpretability.

Modern models are trained on internet-scale data, where most captions are written by non-experts and describe the image poorly or even incorrectly. The result is models with only a fuzzy understanding of these specific terms of art, which makes them less effective in professional contexts.

For many use cases, internet scale data along with correct training formulations result in models that outperform non-expert users. But these models also vastly underperform when compared to trained professionals. If your use case is to build intelligent tools for professionals, this gap in performance matters.

The Gap In Existing Approaches

CLIP learns by matching images to captions. Whether it's the original contrastive loss or SigLIP's sigmoid formulation, the fundamental task is the same: given a batch of images and captions, figure out which caption goes with which image.
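To make the two objectives concrete, here is a minimal pure-Python sketch of both losses on pre-computed embeddings. The `scale` and `bias` values are illustrative assumptions, not taken from any particular model; real implementations learn them and operate on batched tensors.

```python
import math

def _unit(v):
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def similarity_logits(images, texts, scale):
    """Scaled cosine similarities: row i is image i, column j is caption j."""
    imgs = [_unit(v) for v in images]
    txts = [_unit(v) for v in texts]
    return [[scale * sum(a * b for a, b in zip(i, t)) for t in txts] for i in imgs]

def _neg_log_softmax_at(row, k):
    # -log softmax(row)[k], computed with the max-subtraction trick for stability.
    m = max(row)
    return m + math.log(sum(math.exp(x - m) for x in row)) - row[k]

def clip_softmax_loss(images, texts, scale=10.0):
    """Original CLIP: symmetric cross-entropy; each image picks its caption from the batch and vice versa."""
    L = similarity_logits(images, texts, scale)
    n = len(L)
    img_to_txt = sum(_neg_log_softmax_at(L[i], i) for i in range(n)) / n
    cols = [[L[i][j] for i in range(n)] for j in range(n)]
    txt_to_img = sum(_neg_log_softmax_at(cols[j], j) for j in range(n)) / n
    return (img_to_txt + txt_to_img) / 2

def _softplus(x):
    # Numerically stable log(1 + exp(x)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def siglip_sigmoid_loss(images, texts, scale=10.0, bias=-10.0):
    """SigLIP: every (image, caption) pair is an independent match / no-match decision."""
    L = similarity_logits(images, texts, scale)
    n = len(L)
    total = 0.0
    for i in range(n):
        for j in range(n):
            label = 1.0 if i == j else -1.0
            # -log(sigmoid(label * logit)) == softplus(-label * logit)
            total += _softplus(-label * (L[i][j] + bias))
    return total / n  # SigLIP normalizes by the batch size
```

Either way, the loss only rewards telling the captions in a batch apart: with aligned embeddings both losses are lower than with the captions shuffled, regardless of what the captions actually say.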

Crucially, what's in the caption limits what the model can learn. Existing captions from industry standard datasets capture a sliver of what's meaningful in the image. Specifically, this is an information problem: existing captions don't consistently encode information (e.g. shot size, camera angle, composition), so the model never learns them. The limitation is not the architecture but the dataset.

Unfortunately, the bedrock captions used to train most models (e.g. LAION) are drawn from alt text and, to be blunt, they do a poor job of describing the image. Let's take a look at some examples:

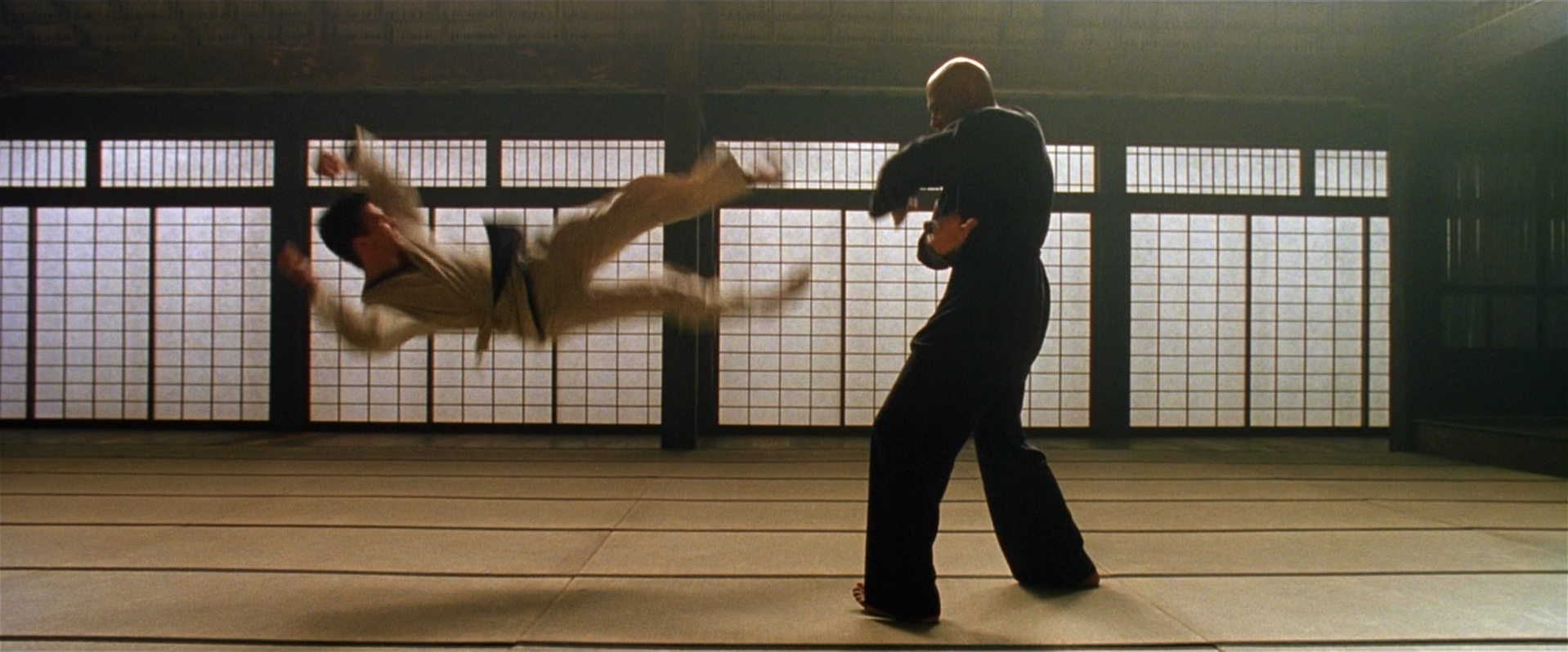

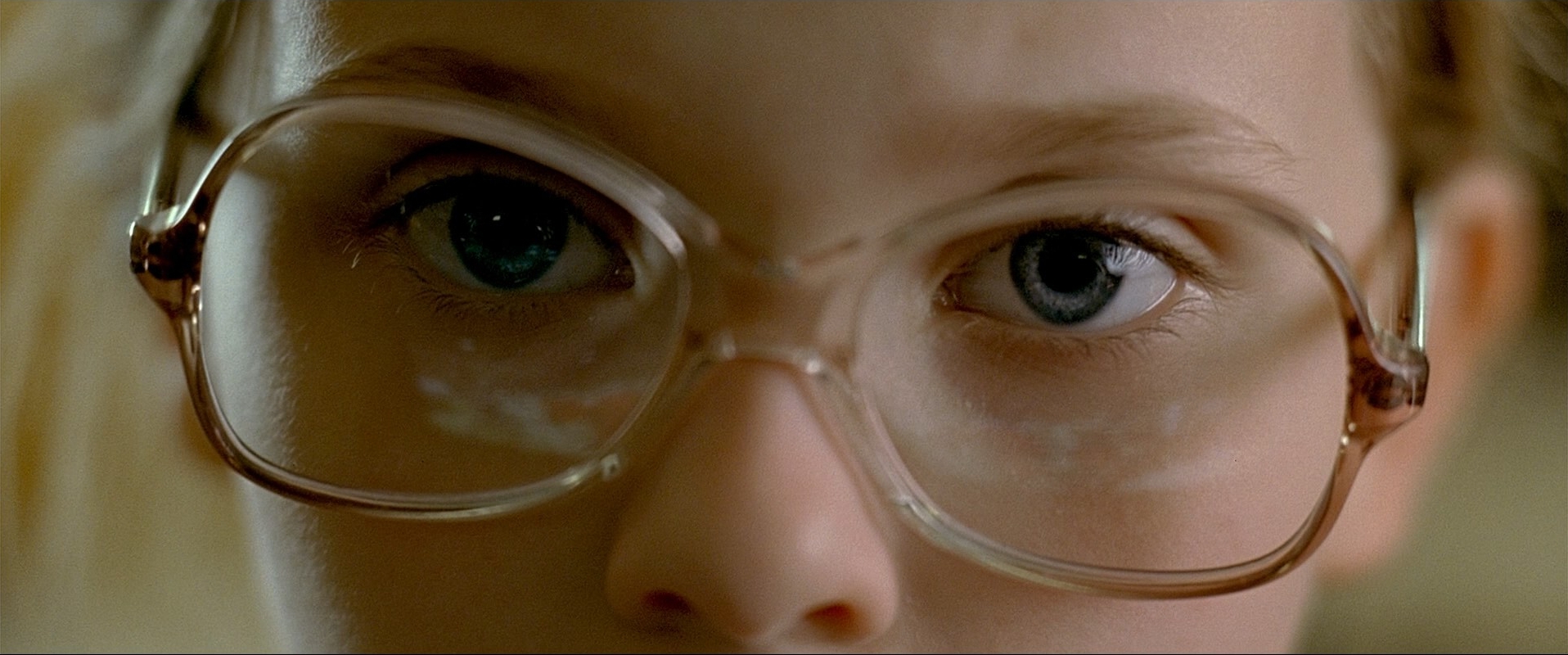

Examples of cinematic vs non-cinematic images from LAION, shown with their original web-scraped captions

The most obvious antidote to this problem is richer captions. LongCLIP showed that most CLIP models have an effective context length of ~20 tokens (a side effect of existing datasets) and demonstrated gains from extending both the actual and the effective context length. In the VLM space, PixMo (the dataset behind Molmo) took this further to great effect with exhaustive captions for pre-training:

The image depicts an interior scene from colonial times, rendered in a slightly impressionistic style using oil pastels. Central to the composition is a person seated at either a piano or harpsichord, dressed in a blue suit, playing as several individuals stand around. The scene is set in a large room with plastered walls, displaying various shades of white and blue that reflect different light sources. In the foreground, a woman wearing a colonially styled dress with a white apron stands to the far right, possibly holding another piece of fabric. The group surrounding the seated figure consists of both men and women, many with dark hair pulled back into buns or braids. One figure, distinguished by a tall stature and a braid, stands near a window adorned with blue drapes on the upper right part of the painting. The background features a mix of dark and lighter blue splotches, creating a dynamic and textured effect, contributing to the overall impressionistic aesthetic. Near the center-right portion, there's a table draped with a white tablecloth, possibly supporting a vase or similar object. The composition also includes a white door in the background and a person leaning against it. One prominent character in brown smock and white shirt is pointing, drawing attention to the musical performance or another focal point within the scene. The figures lack intricate facial details, adding to the impressionistic quality of the artwork.

Rich, essay-length captions from PixMo and ShareGPT4V-COCO.

To the untrained eye, these captions appear exhaustive, but there's often little to no information about the visual grammar of the image from the perspective of a domain expert / industry professional.

Instead of matching concise captions to images, you're now matching essays to images. While this solves the information problem in principle, it introduces a precision problem: the model can satisfy the objective by matching whichever visual features are easiest, and ignore the rest, without ever learning structured visual concepts.

The Solution: Decomposition

Visual language has a structured grammar made up of distinct, mutually exclusive visual concepts. Instead of combining them all into a single long caption, we treat this as a multi-task problem, running 8 parallel tasks that each attend to a distinct aspect of the image.

In other words, instead of asking one question: "what's in this image?", we ask multiple focused questions, such as:

- What is the shot type?

- What is the camera angle?

- What is the lighting style?

- What is the composition?

Each is learned independently, but from the same underlying image.

Not only is this much more readable to the human eye, it's a fundamentally better training formulation. Each task provides a clean, unambiguous training signal, preventing the model from collapsing multiple concepts into a single representation. Adding this structure allows us to learn multiple dimensions of the same image concurrently, leading to a ~14% improvement over the basic single caption formulation. This approach is also compatible with learning negation, offering additional opportunities for the model to learn appropriate features.
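A minimal sketch of the multi-task formulation, assuming each task contributes its own CLIP-style contrastive loss over the same image embeddings and that the per-task losses are averaged with equal weight. The post does not specify the weighting, nor how missing captions are handled; here an image without a caption for a task is simply left out of that task's contrastive batch. All names are hypothetical.

```python
import math

def _unit(v):
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def _info_nce(imgs, txts, scale):
    """Symmetric CLIP-style loss over one task's (image, caption) pairs."""
    n = len(imgs)
    logits = [[scale * sum(a * b for a, b in zip(i, t)) for t in txts] for i in imgs]
    cols = [[logits[r][c] for r in range(n)] for c in range(n)]

    def cross_entropy(rows):
        loss = 0.0
        for k, row in enumerate(rows):
            m = max(row)
            # -log softmax(row)[k]; the matching caption sits on the diagonal.
            loss += m + math.log(sum(math.exp(x - m) for x in row)) - row[k]
        return loss / len(rows)

    return (cross_entropy(logits) + cross_entropy(cols)) / 2

def multitask_loss(image_embs, task_text_embs, scale=10.0):
    """Average one contrastive loss per task, all sharing the same image embeddings.

    task_text_embs maps a task name (e.g. "lighting") to a list holding one caption
    embedding per image, or None where that image has no caption for the task.
    """
    imgs = [_unit(v) for v in image_embs]
    losses = []
    for texts in task_text_embs.values():
        keep = [k for k, t in enumerate(texts) if t is not None]
        if len(keep) < 2:  # a contrastive loss needs at least one negative
            continue
        losses.append(_info_nce([imgs[k] for k in keep],
                                [_unit(texts[k]) for k in keep], scale))
    return sum(losses) / len(losses) if losses else 0.0
```

Because every task has its own softmax, a caption can only be matched against other captions of the same kind (lighting against lighting, framing against framing), which is what keeps each signal clean.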

| Task | Decomposed caption |
|---|---|
| SYNTHETIC | a man in a suit sitting at a table with a bottle of whiskey |
| HUMAN TAGS | serious look, strong stare, whiskey glasses, black suit, black rotary phone |
| COLOR | neutral saturation, analogous color palette |
| COMPOSITION | centered composition |
| FRAMING | medium lens, medium shot, levelled camera angle |
| SHOT TYPE | eye level shot, single |
| LIGHTING | soft lighting, neutral contrast lighting, sidelit lighting |
| LOCATION | indoors |

Breaking down 1 long caption with lots of signal into 8 distinct ones that each capture a unique aspect of the image.
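As a data structure, the decomposition is simply one caption per task instead of one caption per image. A sketch using a subset of the tags above, with hypothetical lowercase task keys; tasks with no tags get an empty caption.

```python
# Task names mirror the example above; the exact keys are hypothetical.
TASKS = ["synthetic", "human_tags", "color", "composition",
         "framing", "shot_type", "lighting", "location"]

def decompose_captions(tags_by_task, tasks=TASKS):
    """One short caption per task; tasks without tags get an empty caption."""
    return {task: ", ".join(tags_by_task.get(task, [])) for task in tasks}

def single_caption(tags_by_task, tasks=TASKS):
    """Baseline formulation: every tag concatenated into one long caption."""
    return ", ".join(tag for task in tasks for tag in tags_by_task.get(task, []))

# A subset of the example shot's tags.
example = {
    "synthetic": ["a man in a suit sitting at a table with a bottle of whiskey"],
    "color": ["neutral saturation", "analogous color palette"],
    "composition": ["centered composition"],
    "lighting": ["soft lighting", "neutral contrast lighting", "sidelit lighting"],
    "location": ["indoors"],
}
captions = decompose_captions(example)
```

The information content is identical in both formulations; what changes is that each decomposed caption can be scored on its own.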

In addition to the 8 captioning tasks, we create 23 dedicated classification task heads: a combination of single-label, multi-label, and binary classifiers per concept. The data for these tasks was generated automatically by specialist in-house models we've developed over the past few years. See our CVEU 2021 talk for more details.
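The three head types differ mainly in their loss. Below is a minimal pure-Python sketch of the standard choices, assuming softmax cross-entropy for single-label heads and per-class sigmoid binary cross-entropy for multi-label and binary heads; the post does not state which losses were used, and the example concepts in the docstrings are illustrative.

```python
import math

def softplus(x):
    # Numerically stable log(1 + exp(x)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def single_label_loss(logits, target):
    """Softmax cross-entropy: exactly one class is correct (e.g. a shot size)."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return lse - logits[target]

def multi_label_loss(logits, targets):
    """Independent sigmoid BCE per class: several labels may hold at once."""
    # -[y*log(s(z)) + (1 - y)*log(1 - s(z))] simplifies to softplus(z) - y*z
    return sum(softplus(z) - y * z for z, y in zip(logits, targets)) / len(logits)

def binary_loss(logit, target):
    """A single yes/no concept (e.g. 'is crowd') is a one-class multi-label head."""
    return multi_label_loss([logit], [target])
```

Unlike the contrastive tasks, these heads predict a fixed label set directly, so they need no negatives from the rest of the batch.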

Taxonomy

Below, you can explore different sections of our taxonomy. There are interactive diagrams on the left side explaining the concepts, and samples from our dataset on the right. We encourage you to click and play around with these to get an intuitive sense of these concepts.

Dataset Size

As seen above, CinemaCLIP is trained on cinematic data. Our validation set was hand-labelled (and re-labelled multiple times) by domain experts. We fine-tuned domain-specific teacher models for individual aspects of visual grammar (e.g. shot type, lighting, composition) and used them to generate high-confidence labels at scale, which make up our training datasets. We encourage you to check out our talk at CVEU 2021 for more details regarding the process.

Our dataset consisted of 750k samples with human-labelled tags. To preserve generalist knowledge in the model, we added another 750k images from DataComp, labelled by our teacher models, to maintain a 50-50 split between generalist data and visual domain expertise.
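One simple way to hold a 50-50 split is to build every batch from equal halves of each source. The sketch below assumes per-batch mixing over lists of sample IDs; the post only states the overall ratio, not how it was enforced, and `mixed_batches` is a hypothetical helper.

```python
import random

def mixed_batches(cinematic, generalist, batch_size, seed=0):
    """Yield batches drawn half from each source (an exact 50-50 mix per batch).

    Both inputs are lists of sample IDs; iteration stops when either source runs out.
    """
    assert batch_size % 2 == 0, "batch_size must be even for an exact 50-50 split"
    rng = random.Random(seed)
    a, b = cinematic[:], generalist[:]
    rng.shuffle(a)
    rng.shuffle(b)
    half = batch_size // 2
    for start in range(0, min(len(a), len(b)) - half + 1, half):
        batch = a[start:start + half] + b[start:start + half]
        rng.shuffle(batch)  # don't leave the two sources in fixed positions
        yield batch
```

Mixing inside each batch (rather than alternating whole batches) means every contrastive step sees cinematic and generalist captions as negatives for each other.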

Architecture

Given the use cases for CinemaCLIP, we chose MobileCLIP-S1, a modern CLIP architecture that can be deployed on edge devices and runs faster than real time. During development of CinemaCLIP, we've had the privilege of running inference across petabytes of video archives in various data centers and compute environments.

This process is expensive and time-consuming. Models capable of fast inference on cheaper hardware are a huge win: they make it possible to analyze very large archives in reasonable time and at reasonable cost. They also enable local inference for on-set and even in-camera use cases.

MobileCLIP-S1 is designed to run on the Apple Neural Engine, the hardware-accelerated inference chip that ships in Apple's phones, laptops and desktops. It balances strong out-of-the-box performance with a small model size.

On-device deployment and integration into native applications allow inference to run where the video resides and on whatever compute is available, be it cloud-based video archives, local production or post-production storage networks, or near-line local device storage.

Fine-Tuning Scope

Any pretrained CLIP model consists of two components, a text encoder and a vision encoder, so we ran ablations to determine how much of each encoder to unfreeze for training. Our intuition was as follows: expert text captions are formulated differently from non-expert captions. Additionally, cinematic images have a different distribution: shots are typically darker and have more diverse compositions than the product shots, advertisements and social media images that dominate internet-scale data.

We curated a subsection of our main dataset and ran ablations across the text encoder and vision encoder to determine which layers to unfreeze.

- Text: Projection + last 2 attention blocks

- Vision: Projection + last 5 attention blocks

These choices held up as we scaled to larger datasets.
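Partial unfreezing comes down to choosing which parameters receive gradients. The sketch below assumes a hypothetical naming scheme (`blocks.<i>.` for transformer blocks, a `proj` prefix for the projection); real MobileCLIP-S1 checkpoints use their own module names, so the pattern and prefixes would need adapting.

```python
import re

def trainable_params(param_names, num_blocks, last_k, head_prefixes=("proj",)):
    """Return the parameter names to unfreeze: the last `last_k` blocks plus the projection.

    Assumes hypothetical names like "blocks.11.attn.qkv.weight" and "proj.weight".
    """
    first_trainable = num_blocks - last_k
    pattern = re.compile(r"(?:^|\.)blocks\.(\d+)\.")
    selected = []
    for name in param_names:
        m = pattern.search(name)
        if m and int(m.group(1)) >= first_trainable:
            selected.append(name)  # one of the last `last_k` blocks
        elif name.startswith(head_prefixes):
            selected.append(name)  # projection layer
    return selected
```

In PyTorch, the selected names could then drive `p.requires_grad = name in selected` inside a loop over `model.named_parameters()`. With `last_k=5` for the vision tower and `last_k=2` for the text tower, this mirrors the split listed above.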

Augmentations

Augmentations were applied only on the text side. Any image augmentation that is harmless for one task undermines another: cropping changes shot size and framing, color jitter changes color and contrast labels, and rotation can turn a level shot into a Dutch angle. Cinematic content is shot with intention; image augmentations destroy that salient information and build invariances where we don't want them.
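Since the images stay untouched, any variation has to come from the captions. The post does not say which text augmentations were used; the sketch below shows three plausible, hypothetical ones (tag dropout, tag shuffling, and template variation) applied to a single task's tags.

```python
import random

# Hypothetical caption templates; not taken from the CinemaCLIP training setup.
TEMPLATES = ["{}", "a film still with {}", "a frame with {}"]

def augment_caption(tags, rng, drop_prob=0.2):
    """Text-side augmentation for one task's tags; the image is never touched.

    Randomly drops tags (always keeping at least one), shuffles their order,
    and wraps them in a randomly chosen template.
    """
    if not tags:
        return ""
    kept = [t for t in tags if rng.random() >= drop_prob] or [rng.choice(tags)]
    rng.shuffle(kept)
    return rng.choice(TEMPLATES).format(", ".join(kept))
```

Each augmented caption still only makes claims that are true of the unmodified image, which is the property image-side augmentations cannot guarantee here.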

Model Soup

Finally, we blend the weights of our fine-tuned model with those of the pretrained model: 75% fine-tuned weights and 25% pretrained weights. This last step ensures we retain generalist performance. Without it, our fine-tuned model alone would perform 1-2% better on cinematic tasks but lose ~10% on general-purpose tasks. Alpha blending lets us retain a 14% improvement in cinematic performance with next to no loss in general-purpose performance.
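The blend is a linear interpolation of the two sets of weights, parameter by parameter. A minimal sketch on dictionaries of flat float lists; with PyTorch state dicts, the same expression applies per tensor. How parameters that exist only in the fine-tuned model (such as new classifier heads) are handled is not stated in the post; keeping them unchanged is an assumption here.

```python
def blend_weights(finetuned, pretrained, alpha=0.75):
    """Linear weight interpolation: alpha * fine-tuned + (1 - alpha) * pre-trained.

    Both arguments map parameter names to flat lists of floats with matching shapes.
    alpha = 1.0 returns the fine-tuned model, alpha = 0.0 the pre-trained one.
    """
    blended = {}
    for name, ft in finetuned.items():
        pre = pretrained.get(name)
        if pre is None:
            # Assumption: new parameters (e.g. classifier heads) have no
            # pre-trained counterpart, so they are kept as fine-tuned.
            blended[name] = list(ft)
        else:
            blended[name] = [alpha * f + (1 - alpha) * p for f, p in zip(ft, pre)]
    return blended
```

Interpolation only makes sense because both models share an architecture and the fine-tuned weights started from the pre-trained ones.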

Evaluations

Cinematic Evals

Here is a categorical comparison of CinemaCLIP against the leading existing CLIP models and select VLMs across all our cinematography datasets. The solid dots denote zero-shot performance, and the rounded dots classifier performance. Overall, we do better than all compared models in every category.

Generalist Evals

We don't want to over-specialize in cinematic expertise. It's important for our models to retain a wide understanding of general concepts so that existing functionality is retained. To that end, we validate our dataset and multi-task training approach by using existing datasets as proxies for generalist tasks.

Proxies for 'general' knowledge retention. Even though we didn't actively attend to these concepts exhaustively, we improved performance on general tasks users care about.

Note that there are many different groups of concepts within these datasets, like emotion and wardrobe, many of which could command dedicated taxonomies and tasks of their own (and indeed, there are many publicly available datasets in these domains). We lumped them all into two tasks because our focus was teaching CLIP the language of cinema. Coverage of these concepts is far from exhaustive throughout the dataset. Regardless, we saw a notable improvement in accuracy across most of these tasks compared to the pre-trained model. CinemaCLIP retains good generalist knowledge, and we intentionally trade ~7% ImageNet accuracy for significantly improved performance on real-world visual tasks relevant to our users.

Conclusion

CinemaCLIP is one part of a family of models and techniques that we built at OZU to understand the art of visual storytelling, and is the backbone for a suite of tools and processes that power OZU's state-of-the-art narrative understanding systems.

It offers a demonstrable improvement over CLIP and VLM models for many industry professional use cases. Not only is it more accurate in understanding cinematic concepts, it can also run on commodity edge hardware at well above real-time rates. This enables opportunities like real-time video search and retrieval systems, live camera assist systems, on-set validation, and more.

Our key contributions besides the model itself are two-fold: a dataset and ontology of visual language at the frame level, and a novel training recipe to add domain expertise to CLIP models while mitigating catastrophic forgetting. We believe this recipe is generic and can be adapted to other domains as well.

Stay tuned for more.

Select References

- Chen, Lin, Jinsong Li, Xiaoyi Dong, et al. "ShareGPT4V: Improving Large Multi-Modal Models with Better Captions." arXiv:2311.12793, 2023. arxiv.org/abs/2311.12793

- Deitke, Matt, Christopher Clark, Sangho Lee, et al. "Molmo and PixMo: Open Weights and Open Data for State-of-the-Art Vision-Language Models." arXiv:2409.17146, 2024. arxiv.org/abs/2409.17146

- Gadre, Samir Yitzhak, Gabriel Ilharco, Alex Fang, et al. "DataComp: In Search of the Next Generation of Multimodal Datasets." arXiv:2304.14108, 2023. arxiv.org/abs/2304.14108

- Ilharco, Gabriel, Mitchell Wortsman, Ross Wightman, et al. "OpenCLIP." 2021. github.com/mlfoundations/open_clip

- Kang, Raphi, Yue Song, Georgia Gkioxari, and Pietro Perona. "Is CLIP Ideal? No. Can We Fix It? Yes!" arXiv:2503.08723, 2025. arxiv.org/abs/2503.08723

- Koukounas, Andreas, Georgios Mastrapas, Michael Günther, et al. "Jina CLIP: Your CLIP Model Is Also Your Text Retriever." arXiv:2405.20204, 2024. arxiv.org/abs/2405.20204

- Paszke, Adam, Sam Gross, Francisco Massa, et al. "PyTorch: An Imperative Style, High-Performance Deep Learning Library." arXiv:1912.01703, 2019. arxiv.org/abs/1912.01703

- Radford, Alec, Jong Wook Kim, Chris Hallacy, et al. "Learning Transferable Visual Models From Natural Language Supervision." arXiv:2103.00020, 2021. arxiv.org/abs/2103.00020

- Singh, Jaisidh, Ishaan Shrivastava, Mayank Vatsa, Richa Singh, and Aparna Bharati. "Learning the Power of 'No': Foundation Models with Negations." WACV, 2025. doi.org/10.1109/WACV61041.2025.00777

- Vasu, Pavan Kumar Anasosalu, Hadi Pouransari, Fartash Faghri, Raviteja Vemulapalli, and Oncel Tuzel. "MobileCLIP: Fast Image-Text Models through Multi-Modal Reinforced Training." arXiv:2311.17049, 2023. arxiv.org/abs/2311.17049

- Zhai, Xiaohua, Basil Mustafa, Alexander Kolesnikov, and Lucas Beyer. "Sigmoid Loss for Language Image Pre-Training." arXiv:2303.15343, 2023. arxiv.org/abs/2303.15343

Technical Addendum

Note on Generalist Knowledge

Zero-shot accuracy on ImageNet-1K is a popular proxy for measuring a CLIP model's general performance. However, ImageNet is an object-centric dataset and doesn't capture much of the nuance our users care about in real-world usage. For instance, about 12% of ImageNet consists of dog breeds, while there is little to no representation of object colors, materials, and textures.

Additionally, zero-shot evaluation on ImageNet uses 82 prompts per class, and many of these use terms from the cinematic vocabulary without any intentionality. For example, some of the prompts include phrases like "close up" and "black and white photo", applied to every class.
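Those prompts enter the evaluation through prompt ensembling: each template is filled in with the class name and encoded, and the resulting text embeddings are averaged into one class embedding. A minimal sketch on pre-computed embeddings (the text encoder is assumed to have run already), showing how every template phrase, cinematic or not, contributes to every class.

```python
import math

def _unit(v):
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def class_embedding(prompt_embs):
    """Prompt ensembling: average unit-normalized template embeddings, then renormalize."""
    units = [_unit(e) for e in prompt_embs]
    mean = [sum(col) / len(units) for col in zip(*units)]
    return _unit(mean)

def zero_shot_predict(image_emb, class_prompt_embs):
    """Pick the class whose ensembled text embedding is most cosine-similar to the image."""
    img = _unit(image_emb)
    scores = {
        label: sum(a * b for a, b in zip(img, class_embedding(embs)))
        for label, embs in class_prompt_embs.items()
    }
    return max(scores, key=scores.get)
```

Because the ensemble averages over templates, a phrase like "close up" is baked into every class embedding equally, so it carries no class-discriminative meaning in this benchmark.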

Evaluations

Full per-category accuracy for every model we evaluated. CinemaCLIP (zero-shot or classifier) is the top performer in each category. Leading VLMs far outperform leading CLIP models, but despite their larger size and much richer embedding space (VLMs work with a grid of tokens per image, whereas CLIP models operate with a single embedding per image), they still fall behind CinemaCLIP. To us, this clearly indicates a gap in the datasets used to fine-tune all these models.

| Group | Category | CinemaCLIP 0-shot | CinemaCLIP Classifier | Qwen3.5-4B | Gemma4-4B | InternVL3.5-4B | Molmo2-4B | DFN ViT-H-14 | MetaCLIP PE-bigG | OpenAI ViT-L-14 | MobileCLIP-S1 | DFN ViT-L-14 | SigLIP2 SO400M | SigLIP2 ViT-gopt |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Color | Contrast | 89.6% | 86.8% | 33.7% | 35.3% | 33.7% | 35.3% | 34.0% | 33.1% | 49.4% | 38.7% | 37.1% | 57.7% | 25.2% |
| | Key | 84.9% | 92.9% | 78.1% | 78.1% | 80.3% | 64.3% | 58.2% | 50.2% | 53.2% | 59.4% | 48.3% | 22.8% | 52.6% |
| | Saturation | 82.6% | 82.6% | 66.5% | 65.4% | 72.1% | 45.9% | 55.1% | 61.8% | 58.1% | 35.8% | 46.8% | 33.3% | 31.8% |
| | Theory | 71.3% | 72.7% | 54.0% | 51.7% | 50.7% | 48.7% | 54.7% | 51.7% | 50.7% | 47.3% | 47.7% | 31.3% | 31.7% |
| | Tones | 86.0% | 86.5% | 50.2% | 62.6% | 70.6% | 62.1% | 58.5% | 50.2% | 52.0% | 55.7% | 47.2% | 24.0% | 17.7% |
| Lighting | Cast | 85.9% | 90.4% | 38.3% | 53.3% | 39.8% | 35.7% | 25.4% | 29.3% | 28.8% | 35.7% | 22.8% | 37.8% | 18.2% |
| | Contrast | 93.9% | 95.3% | 29.8% | 39.1% | 38.7% | 46.1% | 35.3% | 35.5% | 32.6% | 39.0% | 39.4% | 48.4% | 37.6% |
| | Edge | 87.6% | 90.4% | 22.8% | 38.8% | 31.2% | 40.4% | 22.4% | 31.6% | 41.6% | 34.0% | 21.2% | 26.0% | 25.6% |
| | Silhouette | 88.4% | 93.1% | 80.9% | 63.0% | 48.9% | 48.8% | 66.6% | 67.1% | 67.4% | 58.4% | 43.5% | 46.2% | 78.9% |
| Shot | Angle | 73.4% | 82.3% | 41.9% | 49.2% | 33.2% | 49.9% | 28.0% | 13.7% | 19.0% | 19.6% | 25.9% | 21.3% | 17.2% |
| | Composition | 95.5% | 96.0% | 46.0% | 54.5% | 55.7% | 60.5% | 27.8% | 24.3% | 21.3% | 22.0% | 25.2% | 31.4% | 11.4% |
| | Dutch Angle | 61.9% | 78.5% | 62.2% | 65.1% | 46.7% | 49.3% | 27.3% | 44.5% | 38.4% | 56.6% | 25.9% | 47.6% | 68.7% |
| | Focus | 71.3% | 71.2% | 19.9% | 26.6% | 26.3% | 25.1% | 32.9% | 31.2% | 24.4% | 31.3% | 37.3% | 48.2% | 12.6% |
| | Framing | 79.2% | 83.8% | 38.0% | 29.6% | 40.1% | 34.6% | 33.6% | 24.9% | 23.5% | 23.9% | 33.0% | 7.3% | 9.8% |
| | Height | 90.5% | 91.8% | 38.1% | 37.4% | 41.2% | 53.0% | 37.6% | 33.7% | 28.9% | 24.0% | 33.6% | 29.6% | 23.9% |
| | Lens Size | 67.9% | 70.6% | 49.6% | 28.0% | 43.6% | 46.6% | 32.1% | 28.0% | 34.5% | 30.1% | 25.7% | 30.1% | 17.6% |
| | Location | 90.9% | 93.9% | 81.0% | 82.2% | 81.5% | 79.2% | 73.0% | 68.4% | 68.0% | 75.6% | 66.1% | 65.0% | 46.7% |
| | Symmetry | 88.3% | 92.9% | 90.2% | 86.7% | 76.0% | 80.2% | 76.6% | 78.0% | 54.0% | 39.3% | 24.9% | 46.0% | 82.4% |
| | Time of Day | 69.2% | 89.0% | 75.1% | 66.1% | 70.7% | 70.7% | 68.1% | 69.6% | 60.3% | 73.7% | 71.2% | 48.5% | 42.7% |
| | Type | 81.8% | 90.5% | 81.3% | 61.2% | 57.0% | 57.4% | 52.8% | 40.4% | 36.5% | 35.7% | 56.7% | 46.5% | 29.7% |
| | Type - Is Crowd | 91.5% | 99.6% | 97.2% | 88.2% | 94.3% | 94.8% | 55.9% | 69.1% | 68.6% | 77.2% | 37.3% | 52.4% | 69.3% |
| | Type - OTS | 92.0% | 95.5% | 92.5% | 85.0% | 83.9% | 87.6% | 53.2% | 57.0% | 73.9% | 60.3% | 42.1% | 50.5% | 51.2% |
| | Mean | 82.9% | 87.6% | 57.6% | 56.7% | 55.3% | 55.3% | 45.9% | 45.2% | 44.8% | 44.2% | 39.0% | 38.7% | 36.5% |

Ablations

Model Soup

We tried blending our fine-tuned model with the pre-trained one at various alphas and found that 0.75 (75% fine-tuned weights, 25% pre-trained) was the best combination. Not only did we perform better on ImageNet, which was expected, but we also had superior zero-shot performance on cinematic tasks. The classifier heads were slightly worse (88% vs. 89%), which was a reasonable tradeoff.

| Alpha | ImageNet 0-Shot Top-1 | Cinematic 0-Shot | Cinematic Classifiers |
|---|---|---|---|
| α = 0.5 | n/a | n/a | n/a |
| α = 0.75 | 63.4% | 82.2% | 87.5% |
| α = 1.0 | 57% | n/a | 88.5% |

Teacher Model Labelling Threshold

Our 23 teacher models, all classifiers, provided labels for 6 of the 8 tasks. Because they are classifiers, we could experiment with the confidence threshold above which a model's prediction is included in the caption. Higher thresholds produced fewer but higher-quality labels, but too high a threshold left too many captions empty. In practice, a threshold of 0.85 worked best.

| Threshold | Cinematic 0-Shot |
|---|---|
| 0.0 | 74.9% |
| 0.5 | 79.5% |
| 0.6 | 79.0% |
| 0.7 | 80.2% |
| 0.8 | 81.0% |
| 0.85 | 82.2% |
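Thresholding turns each teacher's probabilities into caption text. The sketch below assumes each task's teachers emit (tag, confidence) pairs and that a tag is kept when its confidence is at or above the threshold; `threshold_tags` and `empty_fraction` are hypothetical helpers that illustrate the trade-off in the table.

```python
def threshold_tags(predictions, threshold=0.85):
    """Keep only teacher predictions whose confidence reaches the threshold.

    predictions: list of (tag, confidence) pairs for one task on one image.
    Returns that task's caption, or "" when nothing is confident enough.
    """
    kept = [tag for tag, conf in predictions if conf >= threshold]
    return ", ".join(kept)

def empty_fraction(captions):
    """Share of captions left empty: the cost of raising the threshold."""
    return sum(1 for c in captions if not c) / len(captions)
```

Raising `threshold` makes each surviving tag more trustworthy while pushing `empty_fraction` up, which is the balance the ablation above is tuning.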

No. of Contrastive Tasks

We experimented with the ideal number of tasks to include in our multi-task formulation. Generally, adding more tasks led to better performance. Beyond 6 cinematic tasks there were no notable gains, and with the confidence thresholding described above, over 40% of the captions in a batch ended up empty. For our formulation, 6 was the right balance. If one were to extend this to more domains, we expect performance to generally increase as long as the tasks are well formulated. We only added expertise in the cinematic domain, and it would be interesting to see how far this can be pushed: could we train models with a hundred domain expert tasks at once?

| No. of Captions | ImageNet 0-Shot Top-1 | Cinematic 0-Shot |
|---|---|---|
| 2 | 65.6% | 72.4% |
| 3 | 65.4% | 72.8% |
| 8 | 63.4% | 82.2% |
| 22 | 60.5% | 82.7% |

Batch Size

Our effective batch size was 1,152 images with 9,216 captions per batch (8 captions per image). We were bound by our hardware (three RTX 3090s with 24GB of VRAM each) and were unable to test whether performance increases further with larger batches. Most CLIP research and practitioners' experience suggest that larger batch sizes are better, but to our knowledge there hasn't been a systematic study of the effect of batch size when fine-tuning CLIP models.

Training Dynamics & Hyperparameters

Fine-tuning CLIP models can be fiddly. Many parameters need to be tuned specifically to the architecture you're training. We needed to be judicious about the areas we dove deeper into. We were systematic about some ablations, as elaborated above, and lacked bandwidth to go deeper on other hyperparameters. Here is our assessment of the most significant hyperparameters:

| Parameter | Value | Notes |
|---|---|---|
| No. of layers fine-tuned in the vision encoder | 6 | Sweet spot for MobileCLIP-S1. Tuning more layers meant losing too much general knowledge for a marginal gain in cinematic understanding. |
| No. of layers fine-tuned in the text encoder | 3 | |
| Learning rate | 2e-5, 2e-3 | For the CLIP image & text encoders and the classifier heads, respectively. We used Leslie Smith & fastai's LR finder technique to find the ideal range. We did not experiment with differential learning rates for earlier layers. |
| Alpha blending ratio (1 = full fine-tune, 0 = pre-trained) | 0.75 | Slightly better zero-shot performance on cinematic tasks, +7% on zero-shot ImageNet, and only a 1% drop in classifier accuracy compared to the fully fine-tuned model. |
| Data ratio between pre-trained (DataComp) data and new cinematic data | 50% | Critical to maintaining general knowledge. We experimented with a few sensible ratios, and 50-50 was the best balance. All the data (ours + DataComp) was annotated with cinematic labels by our teacher models. |
| Confidence threshold of auto-labelled tags | 0.85 | Led to about +10% better performance on cinematic tasks, as the training data was more precise. Varying this had no impact on non-cinematic performance. |
| No. of contrastive training tasks | 8 | 6 cinematic + 2 'general' tasks. Grouping our 22 cinematic tasks into 6 captions led to a +14% improvement over singular captions. Could be extended further to other domains. |
| No. of epochs | 3 epochs | Training for longer meant losing too much prior knowledge for a marginal gain in cinematic understanding. We report epochs rather than steps because this observation is based on the number of unique images the model has seen. We saw similar trends with smaller datasets. |
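The learning-rate finder mentioned in the table sweeps the learning rate exponentially over a short run, records the loss at each step, and picks a value where the loss is falling fastest. Below is a minimal sketch of the sweep and of one common selection heuristic (steepest descent of loss against log learning rate); the training step that produces `losses` is assumed, and this is not fastai's implementation.

```python
import math

def lr_range(lr_min, lr_max, num_steps):
    """Exponentially spaced learning rates from lr_min to lr_max (num_steps >= 2)."""
    ratio = (lr_max / lr_min) ** (1 / (num_steps - 1))
    return [lr_min * ratio ** i for i in range(num_steps)]

def suggest_lr(lrs, losses):
    """Pick the LR where the loss drops fastest (most negative slope vs. log LR)."""
    best_i, best_slope = 0, math.inf
    for i in range(1, len(lrs)):
        slope = (losses[i] - losses[i - 1]) / (math.log(lrs[i]) - math.log(lrs[i - 1]))
        if slope < best_slope:
            best_i, best_slope = i, slope
    return lrs[best_i]
```

A sweep like this would be run separately for the encoder and head parameter groups, since the table reports learning rates two orders of magnitude apart for the two.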